Overview

Role

Lead Product Designer & Acting Product Manager

Platform

Power Apps Canvas, Azure OpenAI, Chat RAG Architecture

Mission

Design and launch an internal GenAI chat platform, built on retrieval-augmented generation (RAG), that lets investment professionals analyze complex financial and legal documents in minutes while maintaining trust, traceability, and accuracy.

Outcome

Reduced manual document review time by hundreds of hours and enabled faster, more confident decision-making through transparent AI-powered document analysis.

The Problem

Investment professionals needed to extract critical information from documents hundreds of pages long.

Manually searching investment agreements and prospectuses took hours

Critical insights buried in dense legal and financial language

Existing AI tools not trusted due to hallucinations and lack of citations

Zero tolerance for incorrect information in regulated financial environments

Design Challenge

Design a GenAI system that delivers speed without sacrificing trust, traceability, or accuracy.

This became a trust and explainability design problem, not just an AI interface problem. Designing for a regulated environment meant balancing speed, transparency, accuracy, and usability in every interaction.

Key Features

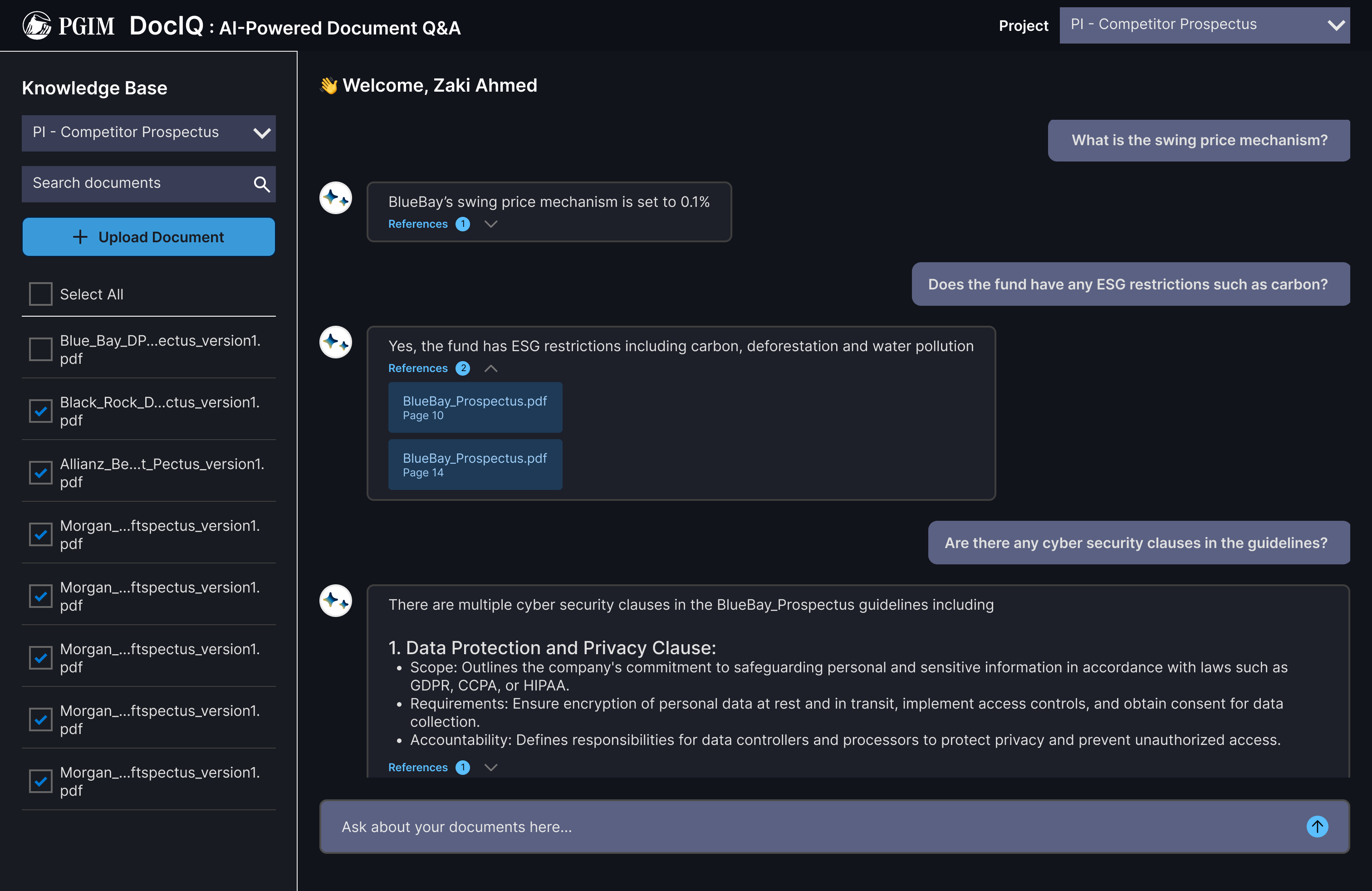

Chat-Based Document Intelligence

Users ask natural-language questions across one or more documents and receive grounded answers with references. This turns document review from manual searching into conversational analysis.

Eliminated hours of manual document searching per review cycle.

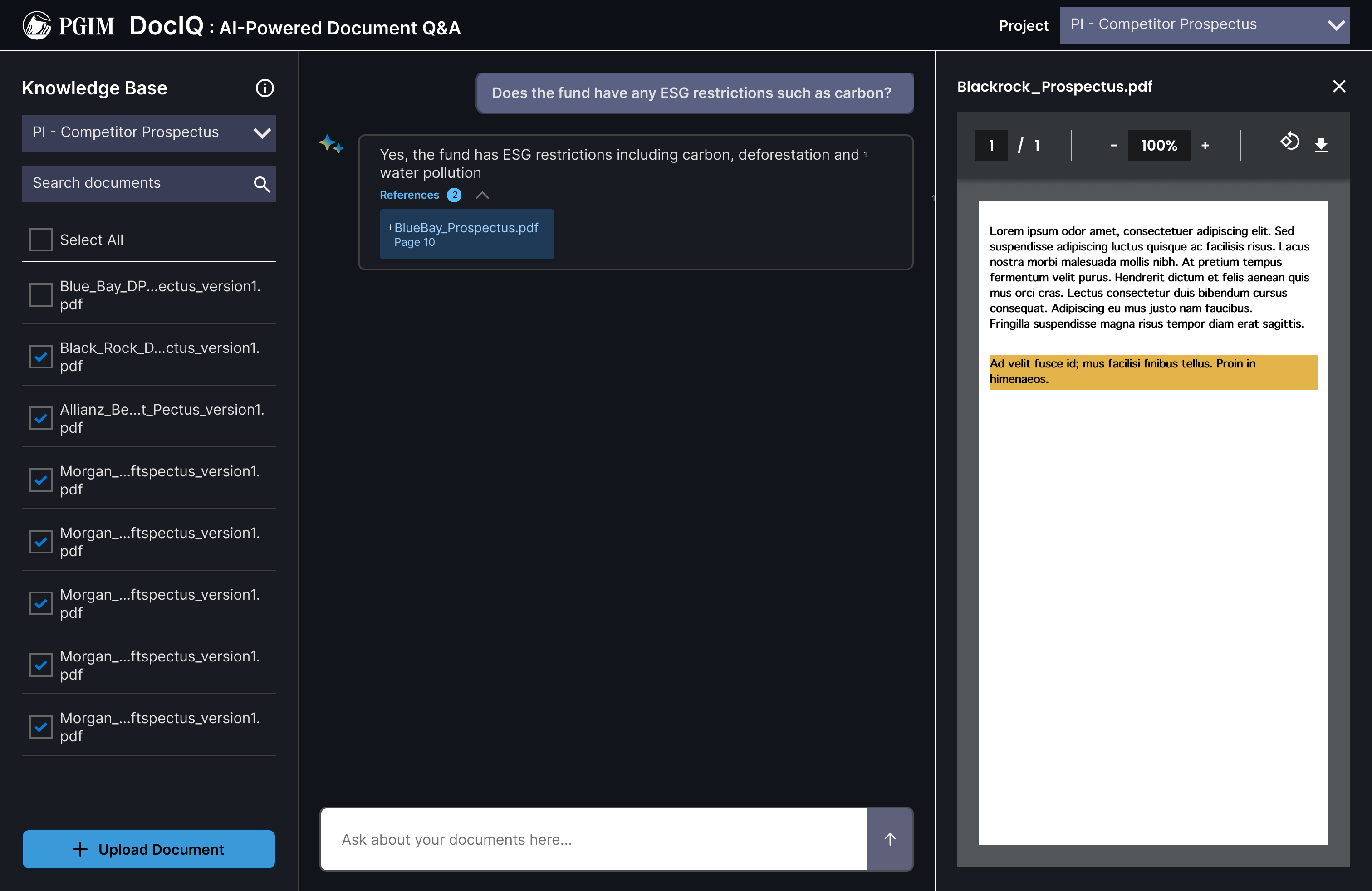

Traceable AI Responses & PDF Source Linking

Every AI response includes reference chunks and links directly to the source document. Users can jump from an answer to highlighted text inside the original PDF.
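To make the answer-to-source hop concrete, here is a minimal sketch of how a cited response might be assembled. It assumes each retrieved chunk carries its document name, a viewer URL, and a 1-based page number; `SourceChunk`, `page_link`, and `format_cited_answer` are hypothetical names, not the platform's actual code. The `#page=N` fragment is a standard PDF open parameter that most PDF viewers honor. Highlighting the exact passage is viewer-specific and is not shown here.

```python
from dataclasses import dataclass


@dataclass
class SourceChunk:
    doc_name: str  # hypothetical: file name shown to the user
    doc_url: str   # hypothetical: URL the PDF viewer can open
    page: int      # 1-based page the chunk was extracted from
    text: str      # the passage displayed as the reference chunk


def page_link(chunk: SourceChunk) -> str:
    # "#page=N" opens the PDF at page N in viewers that support PDF open parameters.
    return f"{chunk.doc_url}#page={chunk.page}"


def format_cited_answer(answer: str, chunks: list) -> str:
    # Append a numbered source list so every [n] marker in the answer is verifiable.
    lines = [answer, "", "Sources:"]
    for i, c in enumerate(chunks, start=1):
        snippet = c.text if len(c.text) <= 80 else c.text[:77] + "..."
        lines.append(f'[{i}] {c.doc_name}, p. {c.page}: "{snippet}" ({page_link(c)})')
    return "\n".join(lines)
```

Keeping the page number on every chunk is what makes a one-click jump possible; without it, a citation can only point at the whole document.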

Builds trust and reduces the risk of acting on a hallucinated answer, because every claim can be checked against its citation.

Guided Prompting with Standard & Favorite Questions

Users can select recommended questions or save frequently used prompts to speed up workflows. Reduces prompt friction and helps users get value immediately.

Lowered the barrier to entry for users unfamiliar with AI prompting.

Multi-Source Document Ingestion

Admins can ingest documents from enterprise sources into the AI knowledge base, which supports scalable document analysis across teams and departments.

Enabled organization-wide adoption without manual document uploads.

Designing for AI Trust

Users expect 100% accuracy from AI. LLMs can hallucinate. Trust must be designed intentionally.

Reference Chunking

Every response is grounded in specific document passages, making answers verifiable.
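Grounding starts with how documents are split. The sketch below is one common approach, assumed here for illustration rather than taken from the project: it cuts each page into overlapping word windows and records the page number on every chunk. `Chunk` and `chunk_pages` are hypothetical names, and the default `size`/`overlap` values are placeholders, not tuned settings.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    page: int        # 1-based page number, kept so answers can link back
    start_word: int  # offset of the first word within the page
    text: str


def chunk_pages(pages: list, size: int = 200, overlap: int = 40) -> list:
    """Split each page into overlapping word windows.

    Chunking per page (never across a page break) is a simplifying
    assumption: it guarantees each chunk maps to exactly one page.
    """
    if not 0 <= overlap < size:
        raise ValueError("overlap must be in [0, size)")
    step = size - overlap
    chunks = []
    for page_no, page_text in enumerate(pages, start=1):
        words = page_text.split()
        # Stop early enough that the final window still adds new words.
        for start in range(0, max(len(words) - overlap, 1), step):
            window = words[start:start + size]
            if window:
                chunks.append(Chunk(page_no, start, " ".join(window)))
    return chunks
```

The overlap lets a sentence that straddles a window boundary appear whole in at least one chunk, at the cost of some duplicated text in the index.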

Source Linking

Direct links from AI answers to exact locations in source PDFs for instant verification.

Transparent Behavior

The AI communicates what it knows, what it doesn't, and where its answers come from.
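One way to let the system say "I don't know" is to abstain when no retrieved passage is similar enough to the question. The sketch below assumes that behavior for illustration. It ranks chunks by cosine similarity and returns nothing when the best matches fall below a threshold. A bag-of-words counter stands in for a real embedding model, and `select_evidence`, `NOT_FOUND`, and the `0.25` threshold are hypothetical.

```python
import math
import re
from collections import Counter

NOT_FOUND = "I couldn't find this in the selected documents."


def embed(text: str) -> Counter:
    # Stand-in for an embedding model: a bag-of-words count vector.
    return Counter(re.findall(r"[a-z0-9%]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def select_evidence(question: str, chunks: list, k: int = 3, min_score: float = 0.25) -> list:
    """Return up to k (score, chunk) pairs above min_score; [] means abstain."""
    q = embed(question)
    scored = sorted(((cosine(q, embed(c)), c) for c in chunks), reverse=True)
    return [(s, c) for s, c in scored[:k] if s >= min_score]
```

A caller would reply with `NOT_FOUND` whenever `select_evidence` returns an empty list, and only send the question to the model when there is evidence to ground it.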

Lessons Learned

AI & Technical

What building with LLMs taught us about designing AI products.

Tokens & Embeddings

Understanding how LLMs process text was essential to designing effective prompting flows and managing user expectations.

Hallucination Behavior

Learning when and why LLMs fabricate information shaped every design decision around trust and transparency.

System Message Design

Prompt engineering and system message architecture became a core UX design skill for controlling AI behavior.
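As an illustration of what "system message architecture" can mean in practice, the sketch below builds a request in the role/content message format used by Azure OpenAI chat completions. The wording of `SYSTEM_MESSAGE` and the `build_messages` helper are hypothetical, not the project's actual prompt, and putting the numbered sources in the user turn rather than the system turn is one design choice among several.

```python
SYSTEM_MESSAGE = (
    "You are a document analysis assistant for investment professionals.\n"
    "Answer ONLY from the numbered sources provided and cite each claim as [n].\n"
    "If the sources do not contain the answer, say so plainly instead of guessing."
)


def build_messages(question: str, sources: list) -> list:
    # Number each source so the model's [n] citations map back to specific chunks.
    numbered = "\n\n".join(f"[{i}] {s}" for i, s in enumerate(sources, start=1))
    return [
        {"role": "system", "content": SYSTEM_MESSAGE},
        {"role": "user", "content": f"Sources:\n{numbered}\n\nQuestion: {question}"},
    ]
```

The resulting list would be passed as the `messages` of a chat completion request. Keeping the behavioral rules in the system turn and the evidence in the user turn makes it easier to change one without rewriting the other.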

Product & UX

Key insights from shipping an AI product in a regulated environment.

Users Expect Certainty

Users treat AI like a search engine. When it's wrong, trust breaks instantly. Design must account for this expectation.

Transparency Drives Adoption

Showing sources and confidence levels increased user willingness to rely on AI outputs in their workflows.

Platform Constraints

Working within the boundaries of enterprise tooling.

Power Apps at Its Limits

Power Apps enabled rapid deployment but introduced scaling and performance constraints we had to design around.

Impact

Hundreds of Hours Saved

Dramatically reduced manual document review time across investment teams.

Faster Analysis Speed

Professionals could extract key insights in minutes instead of hours of manual reading.

Safe GenAI Adoption

Established a trusted, internal GenAI workflow that became the model for future AI initiatives.

New Workflow Created

Introduced a fundamentally new way for teams to interact with and extract value from their document library.

Personal Reflection

"This project required me to wear two hats: product manager and product designer. I learned how LLMs work from a backend perspective, from tokenization and embeddings to retrieval-augmented generation. Understanding the limitations of AI and when hallucinations occur wasn't just technical knowledge; it became the foundation for every design decision. I designed transparency mechanisms to build trust, pushed Power Apps to its absolute limits, and delivered real value despite significant platform constraints. This experience fundamentally changed how I approach AI product design: start with trust, design for uncertainty, and never let the technology lead the user experience."